Force any OpenAI-compatible tool to use Gemini, Groq, Ollama, or DeepSeek instantly.

llm-env creates a unified interface for your AI development. It automatically maps provider-specific keys (like GEMINI_API_KEY) to the standard OPENAI_API_KEY (or ANTHROPIC_API_KEY for Anthropic-protocol providers) in your current shell session, and sets the matching base URL and default model alongside it.

Stop editing .env files. Stop hardcoding providers. Just switch.

- ⚡️ Instant Context Switching: Changes apply immediately. No need to manually

sourcefiles or restart your shell. - 🔌 Universal Adapter: Aliases provider-specific keys (e.g.,

GEMINI_API_KEY) toOPENAI_API_KEY, making almost any tool work with any provider. - 🛠️ Tech Stack Agnostic: Works with

curl(orwgetfor testing), Pythonopenailibrary, LangChain, Node.js, and CLI tools likeaichatorfabric.

New in v1.5: llm-env quickstart — a one-time setup command that adds the current "Recommended" coding models from Synthetic and Alibaba Cloud Coding Plan to your config, on both OpenAI and Anthropic protocols. Pair with Claude Code to get a Claude-Code-compatible workflow on Kimi, GLM, MiniMax, Qwen, DeepSeek, and more — without needing an Anthropic API key. The model lists in this repo are refreshed daily; re-run quickstart after a fresh git pull to pick up new entries. See CHANGELOG.md for full release notes.

v1.2.0: Native Anthropic protocol support — exports ANTHROPIC_* environment variables (including the ANTHROPIC_DEFAULT_OPUS_MODEL / ANTHROPIC_DEFAULT_SONNET_MODEL / ANTHROPIC_DEFAULT_HAIKU_MODEL / CLAUDE_CODE_SUBAGENT_MODEL variables that Claude Code reads) for direct Claude API integration.

Manage credentials for OpenAI, OpenRouter, Cerebras, Groq, and 15+ other providers.

llm-env maps these services to the industry-standard OPENAI_* environment variables (or native ANTHROPIC_* variables for Anthropic providers), ensuring compatibility with almost any tool. API keys are stored securely in your shell profile, never in code.

Pure Bash. Zero Dependencies. Just works (curl or wget required for connectivity testing).

If you work with multiple AI providers, you've likely experienced these pain points:

- Multiple providers, different endpoints: Each provider has unique API endpoints and authentication methods

- OPENAI_ is the standard*: Most AI tools expect OPENAI_* environment variables, but not every provider uses those names

- Constant configuration editing: You end up editing ~/.bashrc or ~/.zshrc repeatedly

- Context switching kills flow: Small mistakes cause mysterious 401s/404s, breaking your development rhythm

- Configuration drift: Different setups across development, staging, and production environments

llm-env quickstart is an interactive setup command that adds the "Recommended" coding models from two providers to your config and walks you through getting an API key:

- Synthetic — Kimi, GLM, MiniMax, DeepSeek, Qwen, Llama, GPT-OSS, and more, all hosted behind one subscription.

- Alibaba Cloud Coding Plan — the four models Alibaba currently recommends for coding (today:

qwen3.6-plus,kimi-k2.5,glm-5,MiniMax-M2.5).

Both providers serve the same models on OpenAI-compatible and Anthropic-compatible endpoints, so each model becomes addressable from any tool you already use — including Claude Code.

llm-env quickstartYou'll be asked which catalog(s) to add (Synthetic, Alibaba, or both). For each one you pick, you'll get the signup URL with the embedded referral code, then a hidden prompt for your API key — which gets appended to your shell rc file (~/.bashrc or ~/.zshrc) so it persists across shells. Each key is then verified with a tiny test call to confirm it works.

If you'd rather script it, pass the source(s) as a positional arg:

llm-env quickstart synthetic # only Synthetic

llm-env quickstart alibaba # only Alibaba

llm-env quickstart synthetic,alibaba # both (same as `all`)

llm-env quickstart all # everything availableWhen stdin isn't a TTY (CI, scripts, piped install), quickstart skips all prompts and just provisions every available catalog — same behavior as before this feature landed.

Once your config is populated, pick a model:

llm-env set synth_kimi # latest Kimi on Synthetic, both protocols

llm-env set synth_qwen-coder # latest Qwen Coder on Synthetic

llm-env set synth_glm-flash # latest GLM Flash (the speed-tuned variant)

llm-env set alibaba_qwen # latest Qwen on Alibaba's Coding Plan

llm-env set anth_synth_kimi-k2.5 # specific model, Anthropic protocol onlyThe full set, naming scheme, and how family-latest aliases work is documented in docs/configuration.md. The model lists in this repo are refreshed daily by an automated job; once you've run quickstart, your config stays as-is unless you re-run it after a git pull. (The parser skips providers that already exist, so re-running is safe and won't touch any sections you've added or edited.)

The headline use case for v1.5: run Claude Code against Kimi, GLM, MiniMax, Qwen, DeepSeek, Llama, and more — through Synthetic or Alibaba's Coding Plan, without an Anthropic API key.

Both providers expose Anthropic-compatible endpoints, and llm-env exports the exact environment variables Claude Code reads to choose its endpoint and model (ANTHROPIC_BASE_URL, ANTHROPIC_API_KEY, ANTHROPIC_MODEL, ANTHROPIC_DEFAULT_OPUS_MODEL / SONNET / HAIKU, CLAUDE_CODE_SUBAGENT_MODEL). So switching is a single command in the same shell you'll run claude from:

llm-env set anth_synth_kimi-k2.5 # Claude Code talks to Kimi K2.5 via Synthetic

claude

llm-env set anth_alibaba_qwen3.6-plus # …or Qwen 3.6 via Alibaba Coding Plan

claude

llm-env unset # clear all overrides — Claude Code falls back

claude # to its own native login (real Claude)Full walkthrough: docs/claude-code-quickstart.md covers install → quickstart → sign up for Synthetic or Alibaba → set provider → run claude. Eight steps, ~5 minutes.

Power users: if you want to drive Claude Code against the real Anthropic API directly (with your own

LLM_ANTHROPIC_API_KEY), the bundled[anthropic]provider supports that —llm-env set anthropic. But for most users,llm-env unsetplus Claude Code's native login is simpler.

The installer just installs. Once llm-env is on your PATH, you can optionally run:

llm-env quickstartto add the Claude-compatible models from Synthetic and Alibaba Cloud Coding Plan. This is opt-in — if you already have your own provider keys (OpenAI, Cerebras, Groq, etc.), you can skip it and just llm-env list.

This tool supports any OpenAI API compatible provider, including:

- OpenAI: Industry standard GPT models

- Anthropic: Native Claude API support with

ANTHROPIC_*variables - Cerebras: Lightning-fast inference with competitive pricing

- Groq: Very-fast inference

- Gemini: Google Gemini models with native OpenAI compatibility

- Synthetic: Flat-rate subscription for Kimi, GLM, MiniMax, Qwen, DeepSeek, Llama, and more

- Alibaba Cloud Coding Plan: Flat-rate coding subscription for Qwen, Kimi, GLM, MiniMax

- Chutes: Flat-rate access to a broad open-model catalog

- Featherless: Flat-rate unlimited inference across thousands of HuggingFace models

- OpenRouter: Access to multiple models through one API

- xAI Grok: Advanced reasoning and coding capabilities

- DeepSeek: Excellent coding and reasoning models

- Together AI: Competitive pricing with wide model selection

- Fireworks AI: Ultra-fast inference optimized for production

- And any OpenAI API compatible provider!

# Default: user install. Falls back to ~/.local/bin if /usr/local/bin isn't writable.

curl -fsSL https://raw.githubusercontent.com/samestrin/llm-env/main/install.sh | bash

# System-wide install (writes to /usr/local/bin):

curl -fsSL https://raw.githubusercontent.com/samestrin/llm-env/main/install.sh | sudo bashIf the installer falls back to ~/.local/bin and that directory isn't on your PATH, the next-steps output will tell you the exact export PATH=... line to add to your shell rc file.

-

Clone this repository:

git clone https://github.com/samestrin/llm-env.git cd llm-env -

Copy the script to your PATH:

sudo cp llm-env /usr/local/bin/ sudo chmod 755 /usr/local/bin/llm-env

-

Add the helper function to your shell profile (

~/.bashrcor~/.zshrc):# LLM Environment Manager llm-env() { source /usr/local/bin/llm-env "$@" }

-

Set up your API keys in your shell profile:

# Add these to ~/.bashrc or ~/.zshrc export LLM_CEREBRAS_API_KEY="your_cerebras_key_here" export LLM_OPENAI_API_KEY="your_openai_key_here" export LLM_GROQ_API_KEY="your_groq_key_here" export LLM_OPENROUTER_API_KEY="your_openrouter_key_here"

-

Reload your shell:

source ~/.bashrc # or ~/.zshrc

# List all available providers

llm-env list

# Set a provider (switches all OpenAI-compatible env vars)

llm-env set cerebras

llm-env set openai

llm-env set groq

# Show current configuration

llm-env show

# Unset all LLM environment variables

llm-env unset

# Get help

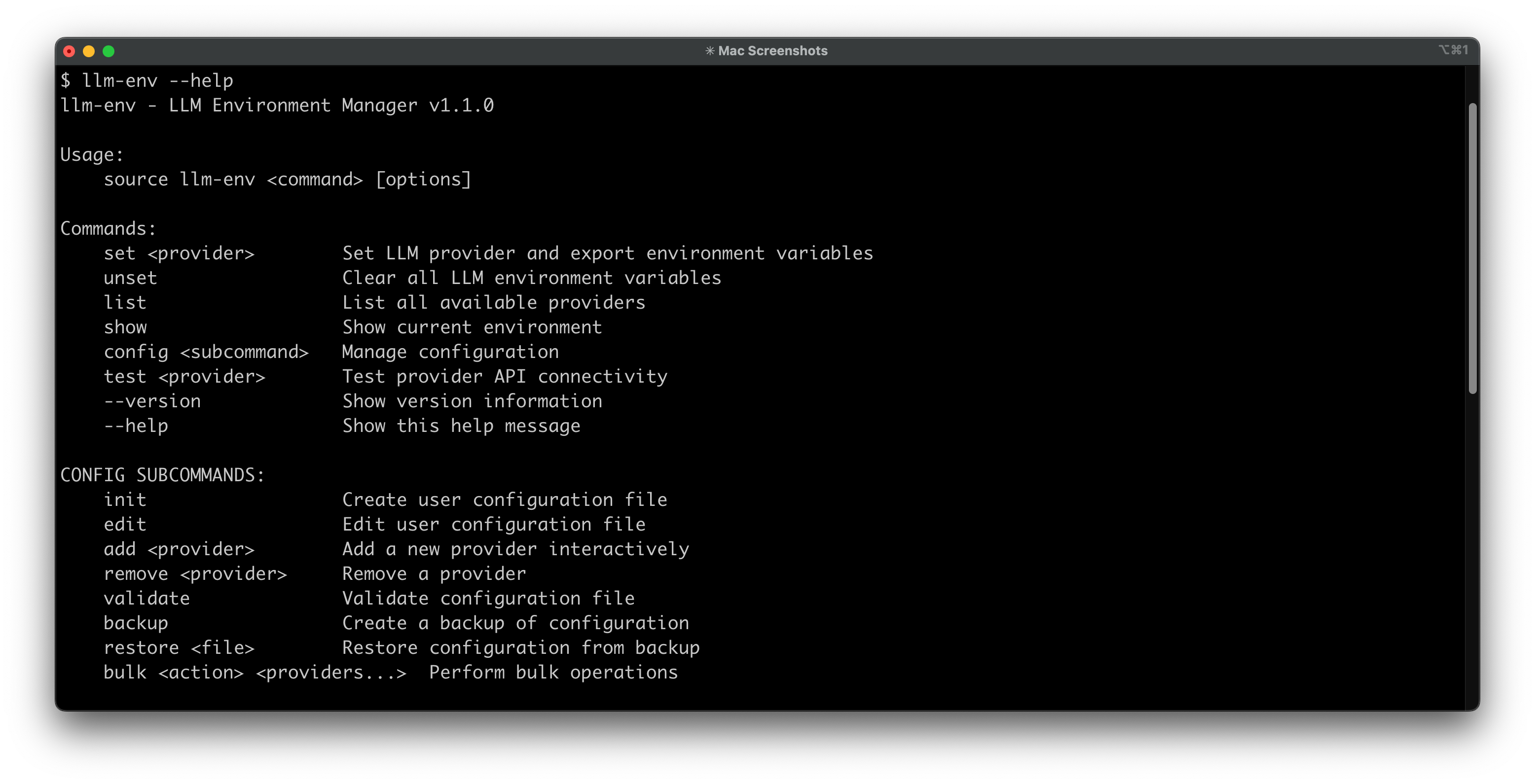

llm-env --help

# Test provider connectivity

llm-env test cerebras# Start with free tier

llm-env set openrouter2 # Uses deepseek free model (if you are using the default config)

# When free tier is exhausted, switch to paid

llm-env set cerebras # Fast and affordable

# For specific tasks, use specialized models

llm-env set groq # For speed

llm-env set openai # For qualityOnce you've set a provider, any tool using OpenAI-compatible environment variables will work:

# With curl

curl -H "Authorization: Bearer $OPENAI_API_KEY" \

-H "Content-Type: application/json" \

-d '{"model":"'$OPENAI_MODEL'","messages":[{"role":"user","content":"Hello!"}]}' \

$OPENAI_BASE_URL/chat/completions# With Python OpenAI client

python -c "import openai; print(openai.chat.completions.create(model=os.environ['OPENAI_MODEL'], messages=[{'role':'user','content':'Hello!'}]))"qwen -p "What is the capital of France?" # Uses current provider automatically# Set up for development

llm-env set cerebras # Fast and cost-effective for testing

# Test your application

./your-app.py

# Switch to production model when ready

llm-env set openai # Higher quality for production# Configure different providers for different tasks

llm-env set deepseek # Excellent for code generation

llm-env set groq # Fast inference for real-time apps

llm-env set openai # Complex reasoning tasks

# Set multiple providers at once (e.g., OpenAI + Anthropic)

llm-env set cerebras,anthropic

# Or define a group in your config and set it by name

llm-env set default # Sets all providers in the "default" group

# Switch between providers as needed

llm-env list # See all available providers and groups

llm-env show # Check current configuration# Use with curl

curl -H "Authorization: Bearer $OPENAI_API_KEY" \

-H "Content-Type: application/json" \

$OPENAI_BASE_URL/models

# Use with Python scripts

python your_script.py # Uses current provider automatically

# Test connectivity

llm-env test cerebras # Verify provider is workingThe script uses a flexible configuration system that allows you to customize providers and models without modifying the script itself.

# Create a user configuration file

llm-env config init

# Edit your configuration

llm-env config edit# Add a new provider

llm-env config add my-provider

# Validate configuration

llm-env config validate

# Backup configuration

llm-env config backup

# Restore from backup

llm-env config restore /path/to/backup.conf

# Bulk operations

llm-env config bulk enable cerebras openai

llm-env config bulk disable groq openrouterDefine groups to set multiple providers with a single command:

# In ~/.config/llm-env/config.conf

[group:default]

providers=cerebras,anthropic# Sets both cerebras (OPENAI_*) and anthropic (ANTHROPIC_*) at once

llm-env set default

# Or use comma-separated providers directly (no config needed)

llm-env set cerebras,anthropicFor detailed configuration options, examples, and advanced setup, see the Configuration Guide

# Verify setup

llm-env list

llm-env show

# Test API connectivity

llm-env test cerebras

# Enable debug mode for detailed troubleshooting

LLM_ENV_DEBUG=1 llm-env list

# Or manual test

curl -H "Authorization: Bearer $OPENAI_API_KEY" $OPENAI_BASE_URL/modelsFor detailed troubleshooting, common issues, and solutions, see the Troubleshooting Guide

llm-env is written in Bash so it runs anywhere Bash runs—macOS, Linux, containers, CI—without asking you to install Python or Node first. It's intentionally compatible with older shells and includes compatibility shims for legacy behavior.

Universal Compatibility:

- Works out-of-the-box on macOS's default Bash 3.2 and modern Bash 5.x installations

- Linux distros with Bash 4.0+ are fully supported

- Backwards-compatible layer ensures features like associative arrays "just work," even on Bash 3.2

- Verified by automated test matrix across Bash 3.2, 4.0+, and 5.x on macOS and Linux

Security Benefits:

- Keys live in environment variables—never written to config files

- Outputs are masked (e.g., ••••abcd) to keep secrets safe on screen and in screenshots

- Switching is local; nothing is sent over the network except your own API calls during tests

- No external dependencies means fewer attack vectors

Complete documentation is available in the docs directory:

- Configuration Guide - Detailed setup and customization

- Troubleshooting Guide - Common issues and solutions

- Development Guide - Contributing and development

- Compatible Tools - Applications that work with llm-env

Comprehensive test suite ensures reliability across platforms and Bash versions:

# Run all tests

./tests/run_tests.sh

# Run specific test suites

./tests/run_tests.sh --unit-only

./tests/run_tests.sh --integration-only

./tests/run_tests.sh --system-only

# Run individual test files

bats tests/unit/test_validation.bats

bats tests/integration/test_providers.bats- Unit Tests (

tests/unit/) - Core functionality and validation - Integration Tests (

tests/integration/) - Provider management and configuration - System Tests (

tests/system/) - Cross-platform compatibility and edge cases - Regression Tests - Prevent known issues from reoccurring

All test suites passing across supported platforms:

- Unit Tests: 40/40 passing

- Integration Tests: 13/13 passing

- System Tests: 40/40 passing

- Total Coverage: 93 test cases

Platform Support:

- macOS (Bash 3.2+ and 5.x)

- Ubuntu/Linux (Bash 4.0+)

- Multi-version compatibility testing

- BATS testing framework

- Bash 3.2+ (automatically tested across versions)

- No external dependencies required for basic tests

For Anthropic-protocol tools: llm-env exports the ANTHROPIC_* environment variables that Anthropic-protocol tools read, so you can point them at non-Anthropic models — no Anthropic API key required.

1. Claude Code

Run Claude Code against any Anthropic-compatible endpoint — no Anthropic API key required. The quickstart command sets you up on Synthetic and Alibaba Coding Plan, which between them give you Kimi, GLM, Qwen, DeepSeek, MiniMax, and more behind one subscription each. Provider-direct APIs (e.g. Kimi, MiniMax) also work.

llm-env set anth_synth_kimi-k2.5 # Claude Code now talks to Kimi via Synthetic

claudeFull walkthrough: docs/claude-code-quickstart.md.

For OpenAI-protocol tools: llm-env is the missing bridge for tools that default to OpenAI. It has been verified to work instantly with:

2. Aider (AI Pair Programmer)

Force Aider to use cheaper/faster models via the generic OpenAI interface without complex flags.

llm-env set groq

# Now Aider uses Groq's Llama 3 via the OpenAI compatibility layer

aider --model openai/llama3-70b-8192Stop manually passing --api_base and --api_key arguments.

llm-env set cerebras

interpreter -y # Runs at lightning speed4. Fabric

Use Fabric patterns with any provider without editing configuration files.

llm-env set gemini

echo "Explain quantum computing" | fabric --pattern explain- Simon Willison's llm: The CLI tool for managing LLMs.

- LangChain: Perfect for testing generic OpenAI chains against other providers.

- LiteLLM: Simplifies calling all LLM APIs.

- Qwen-Code: See my qwen-prompts repo for hybrid prompt chaining setups.

Additional: Applications, Scripts, and Frameworks compatible with llm-env

Contributions are welcome! See the Development Guide for details on:

- Adding new providers

- Improving functionality

- Testing and validation

- Code style guidelines

Current Version: 1.6.0

For detailed version history, feature updates, and breaking changes, see CHANGELOG.md.

If you find llm-env useful, please consider starring the repository and supporting the project:

MIT License - see LICENSE file for details.